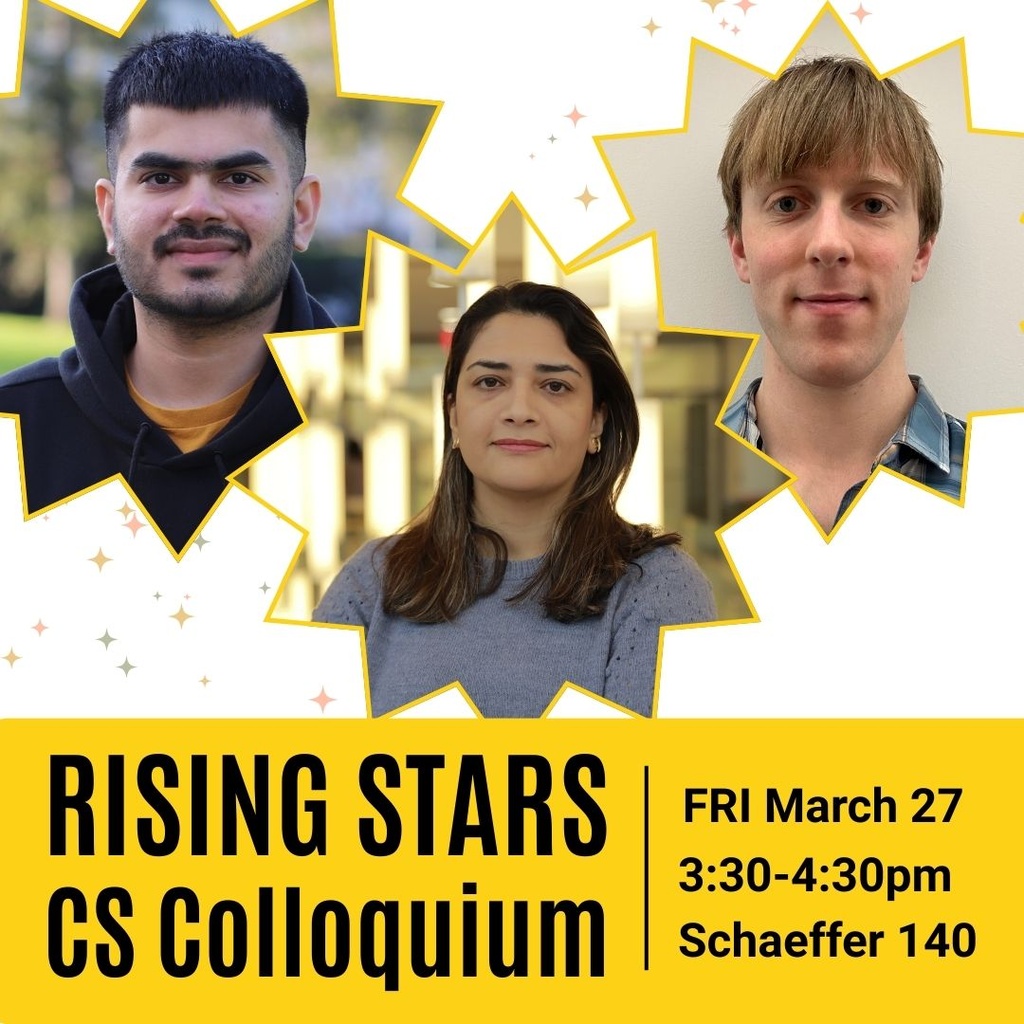

Join us Friday, March 27th from 3:30-4:30 pm for a very special CS Colloquium. We'll hear research presentations by our three invited guests - noteworthy PhD students from other universities whose research is of keen interest to our computer science community. All faculty, undergrads, alumni, and graduate students from all departments are welcome to attend.

After the talks, join the speakers and our CS Department for our Founders Day reception in MLH 3 from 4:30-5:30pm (please RSVP by 3/23 if planning to attend the reception.) We'll have time to chat with the speakers, as well as enjoy ice cream sundaes.

----------

Speakers and Abstracts:

The following three PhD students will give presentations:

--------------

Cayden Codel, PhD student, Carnegie Mellon University

Title: Making SAT Solvers More Trustworthy

Abstract:

Boolean satisfiability (SAT) solvers are powerful automated reasoning tools. They have been used to resolve open questions in mathematics, they form the core of SMT solvers, and they are the engine behind many services in industry, particularly at Amazon. One strength of modern SAT solvers is that they emit machine-checkable proofs when they solve a problem. By checking these proofs with verified software, we can gain a high degree of trust in a SAT solver's answer. However, other steps in the SAT solving process, such as formula encodings and symmetry breaking, do not have as clear of a trust story.

In this talk, I will discuss my work on the Trestle project in the Lean 4 theorem prover. Trestle provides tools for writing verified formula encodings and for engaging in interactive and automated symmetry breaking. So far, Trestle has been instrumental in several mathematical verifications, and the goal of my PhD thesis is to make Trestle the standard tool for verified SAT solving.

Bio: Cayden Codel is a fourth year PhD student at Carnegie Mellon University, advised by Marijn Heule and Jeremy Avigad. His work focuses on the synergies between interactive theorem proving and automated reasoning. In particular, he uses the Lean theorem prover to formalize SAT solving tools. He also works on practical SAT proof checking, and has assisted in a few mathematical formalizations in Lean.

-----------------

Maryam Rostamipoor, PhD student, Stonybrook University

Title: Preventing Sensitive Data Leakage in Modern Cloud-Native Platforms

Abstract:

Cloud-native environments leverage technologies such as microservices, serverless computing, and container orchestration to build scalable and resilient applications. Among these, serverless platforms and Kubernetes have emerged as two of the most widely adopted architectural paradigms. However, both introduce distinct security challenges—particularly the leakage of sensitive data such as API keys, tokens, and credentials. These risks often arise from multi-tenancy, ephemeral execution environments, excessive permissions, and insecure default configurations. Existing defenses, including software-only sandboxing in serverless platforms and external Key Management Services (KMS) in Kubernetes, remain inadequate. They are vulnerable to memory disclosure and transient execution attacks and provide limited protection against misconfigurations or overly permissive access controls.

This talk examines the problem of sensitive data leakage in serverless and Kubernetes-based environments and proposes system-level mechanisms to mitigate these threats under widely accepted threat models. First, LeakLess applies selective in-memory encryption combined with a confined I/O module to ensure that leaked data remains cryptographically protected, preventing exploitation even under memory-disclosure or transient-execution attacks while incurring minimal performance overhead. Second, KubeKeeper introduces fine-grained encryption and pod-level decryption authorization for Kubernetes Secrets, eliminating excessive-permission exposures without degrading runtime performance. Together, these contributions provide practical, system-level defenses against data leakage in modern cloud-native systems, mitigating information exposure in serverless platforms and access-control risks in Kubernetes environments.

Bio: Maryam Rostamipoor is a PhD candidate in the Department of Computer Science at Stony Brook University, where she is advised by Professor Michalis Polychronakis. Expected to graduate in 2026, her research focuses on protecting sensitive data in modern cloud-native environments, with an emphasis on practical system-level defenses for serverless and container orchestration platforms.

She has developed innovative systems such as LeakLess and KubeKeeper to mitigate memory-disclosure and excessive-permission risks, with her work appearing in top-tier security venues including NDSS and IEEE EuroS&P. Her contributions have been recognized with the NDSS Fellowship and the Catacosinos Fellowship for Excellence in Computer Science. Maryam combines strong engineering insight with practical deployment considerations to address critical security challenges in modern cloud infrastructure.

----------------

Yash Vekaria, PhD student, University of California, Davis

Title: Towards Systematic Black-Box Audits of Opaque Data-Driven Personalization Ecosystems

Abstract:

Modern digital ecosystems continuously observe and model users through complex pipelines that involve data collection, sharing, and its downstream use for profiling and personalization. However, users are often unaware of what data is collected about them, who it is shared with, what profiles are inferred, or how these profiles influence downstream decisions such as personalized advertisements, recommendations, or AI responses. These opaque data practices enable the aggregation and reuse of user's personal data across multiple contexts, creating privacy risks for the end users. The mechanisms governing these data flows are often black-box, making it inherently challenging to understand and quantify the underlying privacy risks.

In this talk, I will present my research on large-scale, black-box auditing techniques to systematically uncover opaque data flows on the web and understand downstream consumer harms related to data collection, sharing and use. First, I will describe my work on reverse-engineering tracking technologies employed by websites to study "data collection" in digital ecosystems. Second, I will discuss the user-centric approach at understanding downstream "data sharing" harms from brokerage and marketing. Finally, I will cover privacy risks associated with "data use" in modern AI-based ecosystems through my research on persona-guided audits of personalization in GenAI assistants.

Bio: Yash Vekaria is a PhD candidate in the Department of Computer Science at University of California, Davis, where he is advised by Professor Zubair Shafiq. Broadly, his interests lie in privacy, security, and technology policy across modern digital ecosystems. His research focuses on uncovering hidden risks emerging from opaque data practices on the web and examining its implications for consumers. At a high level, he leverages internet measurement techniques and builds novel data-driven black-box audit systems to reverse-engineer opaque data and monetary flows on the Internet. His research has been published in top-tier security and privacy venues such as IEEE S&P, USENIX, and IMC. He is a recipient of the GGCS PhD Fellowship (2024) and Georgia Tech Cybersecurity Summer Fellowship (2023). Yash's research has also been featured in various global news media such as The New York Times, The Verge, The Times, Vox, and others and has contributed to multiple lawsuits against several companies that have engaged in unlawful consumer surveillance. He has also been invited by the Federal Trade Commission (FTC) to present his research to technologists, lawyers, and policymakers.